During the 2024–2025 academic year, I decided to start writing detailed lecture notes on Topics in Information-Theoretic Cryptography (https://dacesresearch.org/infocrypto/). At the time, I was still thinking about research (e.g., in differential privacy, zero-knowledge, and information-theoretic security more broadly) while also preparing to transition into my faculty role at UIUC.

The early drafts from that period would eventually form the backbone of my Fall 2025 graduate course at UIUC. These weren’t intended to be a book (at least, not at first). They were simply my attempt to consolidate ideas I was using in my research (from fingerprinting lower bounds to statistical zero-knowledge to watermarking generative models) into a cohesive pedagogical narrative.

I experimented heavily with new ways to explain familiar concepts. I rewrote some proofs repeatedly. I paired classical topics (e.g., the One-Time Pad and Shannon entropy) with modern concerns such as data-market privacy risks, statistical attacks on machine learning models, and quantum-era cryptographic threats.

By the time I arrived at UIUC in Fall 2025, the notes had already grown into something far larger than a lecture packet. Teaching the course from these notes, and expanding them week after week, revealed that they could become more than supplementary material. Maybe these notes could become a full textbook?

This blog post is a reflection on that journey: how the material grew, what the book covers, and the many people and institutions who made it possible.

Reflections on the Process

1. Writing revealed connections I hadn’t noticed before.

Integrating zero-knowledge (ZK), differential privacy (DP), secure multiparty computation (MPC), and quantum topics forced me to articulate the conceptual threads uniting them.

2. Student questions shaped the clarity of the exposition.

When multiple students struggled with the same definition, I rewrote it. Many of those improved explanations are now part of the book.

3. Compiling a textbook is a creative research act.

Several new lemmas, interpretations, and frameworks arose during the writing (simply from trying to explain concepts more cleanly).

Book Chapters

Compiling the textbook required reorganizing an entire semester’s worth of evolving lecture notes into a coherent structure that, I hope, can guide a reader from the basics of probability to the frontiers of modern security. Below is a thematic overview of how the chapters came together.

1. Foundations

The book opens with a modern introduction to cryptography, revisiting the motivations, core goals, and roles of secrecy, randomness, and adversaries. It then transitions through a detailed review of probability (e.g., expectation, independence, conditional distributions) and into essential tools from information theory.

I believe this foundation anchors the rest of the text and supports the many advanced topics that follow.

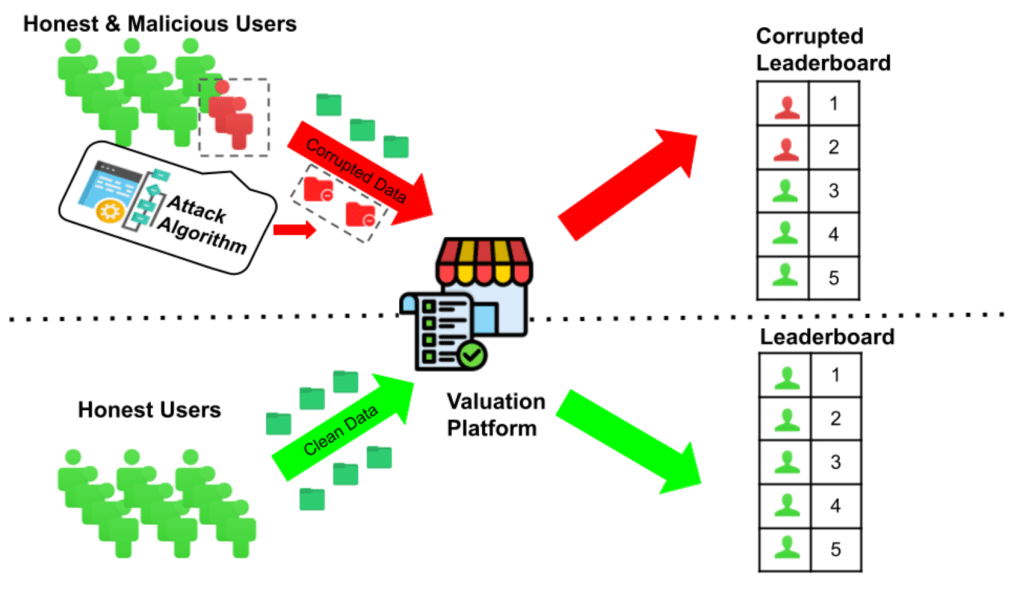

2. Attacks That Motivate the Theory

A distinctive early feature of the book is its chapter on attacks, including:

- reconstruction attacks

- chosen-plaintext and side-channel attacks

- valuation attacks in data markets

These examples provide students with an intuitive understanding of what must be defended and why theory matters.

3. Differential Privacy: From Basics to RDP and Hypothesis Testing

DP occupies several chapters, covering:

- Laplace and Gaussian mechanisms

- composition theorems

- Rényi DP

- DP-SGD

- framing DP through the lens of hypothesis testing

This was one of the most extensive parts of the rewriting process, as I attempted to unify multiple strands of the privacy literature into one narrative.
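To give a flavor of the first item in the list above, here is a minimal sketch of the Laplace mechanism applied to a counting query. It is an illustration I wrote for this post, not an excerpt from the book: the function names (`laplace_noise`, `laplace_mechanism`, `private_count`) are hypothetical, and it assumes a query with sensitivity 1 (adding or removing one record changes the count by at most 1). It also uses ordinary floating-point arithmetic, which is fine for teaching but is known to be unsafe for production DP systems.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample from Laplace(0, scale).

    The difference of two i.i.d. exponential variables with mean `scale`
    follows a Laplace distribution with that scale.
    """
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def laplace_mechanism(true_answer: float, sensitivity: float,
                      epsilon: float, rng: random.Random) -> float:
    """Release true_answer plus Laplace noise of scale sensitivity / epsilon."""
    if epsilon <= 0 or sensitivity <= 0:
        raise ValueError("epsilon and sensitivity must be positive")
    return true_answer + laplace_noise(sensitivity / epsilon, rng)


def private_count(records, predicate, epsilon: float,
                  rng: random.Random) -> float:
    """Noisy count of records satisfying `predicate` (assumed sensitivity 1)."""
    true_count = sum(1 for r in records if predicate(r))
    return laplace_mechanism(true_count, 1.0, epsilon, rng)


if __name__ == "__main__":
    rng = random.Random()  # illustrative only; not a cryptographic source
    ages = [23, 35, 41, 29, 52, 61]
    print(private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng))
```

Smaller values of `epsilon` give a larger noise scale, which is the privacy/utility tradeoff that the composition and Rényi DP chapters then track across many queries.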

4. Lower Bounds in Differential Privacy

Another major contribution of the book is its treatment of lower bounds:

- packing arguments

- fingerprinting codes

- mutual-information-based bounds

- connections to group privacy

These tools help readers understand the inherent limitations of privacy guarantees.

5. Statistical Estimation, Testing, and Machine Learning Under DP

Later chapters connect DP mechanisms to classical statistical tasks:

- mean/variance estimation

- linear regression

- hypothesis testing

- utility tradeoffs

Each topic demonstrates how information-theoretic reasoning guides algorithm design.

6. Privacy in Distributed Systems: LDP, Shuffling, MPC, FL

This chapter weaves together local differential privacy and secure multiparty computation—two topics rarely unified in a single textbook:

- randomized response and k-ary LDP

- shuffle model and ESA

- MPC definitions and protocols

- secure summation

- federated learning with DP
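As a small illustration of the secure summation item above, here is a sketch based on additive secret sharing. It is my own toy example rather than a protocol taken from the book, and it rests on several assumptions: all parties are honest-but-curious, every pair of parties has a private channel, and inputs are non-negative integers whose sum is smaller than the modulus. The names `MODULUS`, `share`, and `secure_sum` are hypothetical.

```python
import secrets

# Assumed modulus; any value larger than the largest possible sum works.
MODULUS = 2**61 - 1


def share(value: int, n_parties: int) -> list[int]:
    """Split `value` into n_parties additive shares modulo MODULUS.

    Any n_parties - 1 of the shares are uniformly random and, on their own,
    reveal nothing about `value`.
    """
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % MODULUS
    return shares + [last]


def secure_sum(inputs: list[int]) -> int:
    """Simulate all parties locally and return the sum of their inputs."""
    n = len(inputs)
    # sent[i][j] is the share that party i sends to party j.
    sent = [share(x, n) for x in inputs]
    # Each party j adds up the shares it received and publishes the result.
    published = [sum(sent[i][j] for i in range(n)) % MODULUS for j in range(n)]
    # Adding the published partial sums reveals only the total.
    return sum(published) % MODULUS


if __name__ == "__main__":
    print(secure_sum([3, 5, 10]))
```

In a real deployment each party would run on its own machine; the single-process simulation here only shows why the partial sums combine to the correct total.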

7–10. Zero-Knowledge Proofs and Information-Theoretic Proof Systems

These chapters form a complete narrative arc:

- classical ZK protocols (3-coloring, GI)

- statistical zero-knowledge and SZK-complete problems

- multi-verifier SZK

- ZK over secret-shared data

- linear PCPs and IOPs

- polynomial commitments and inner-product arguments

11. Multi-Party Differential Privacy

A modern and emerging topic, combining cryptographic and information-theoretic privacy:

- adversary models

- distributed noise-addition protocols

- MPC-based DP

- simulation and composition theorems

This chapter, in my opinion, is one of the most forward-looking in the book. (I have some active research projects in this space.)

12. Quantum Cryptography

A full chapter on quantum mechanics and its cryptographic implications, featuring:

- the photon-polaroid experiment

- superposition, entanglement, and measurement

- Shor’s algorithm

- QKD (BB84)

- pure vs. mixed states

This chapter offers both intuitive and formal perspectives.
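For readers who want something hands-on, the sifting step of BB84 can be simulated classically. The sketch below is my own simplification, not material from the book: it assumes a noiseless channel with no eavesdropper, models a measurement in the wrong basis as a fair coin flip, and uses a hypothetical function name, `bb84_sift`.

```python
import random


def bb84_sift(n_qubits: int, rng: random.Random):
    """Simulate BB84 preparation, measurement, and basis sifting.

    Returns (alice_key, bob_key): the bits kept at positions where
    Alice and Bob happened to choose the same basis.
    """
    alice_bits = [rng.randrange(2) for _ in range(n_qubits)]
    alice_bases = [rng.choice("+x") for _ in range(n_qubits)]
    bob_bases = [rng.choice("+x") for _ in range(n_qubits)]

    # Matching basis: Bob reads Alice's bit exactly (noiseless assumption).
    # Mismatched basis: Bob's outcome is uniformly random.
    bob_results = [
        bit if a_basis == b_basis else rng.randrange(2)
        for bit, a_basis, b_basis in zip(alice_bits, alice_bases, bob_bases)
    ]

    # Bases (not bits) are compared publicly; mismatched positions are dropped.
    keep = [i for i in range(n_qubits) if alice_bases[i] == bob_bases[i]]
    alice_key = [alice_bits[i] for i in keep]
    bob_key = [bob_results[i] for i in keep]
    return alice_key, bob_key


if __name__ == "__main__":
    a_key, b_key = bb84_sift(32, random.Random())
    print(a_key == b_key, len(a_key))
```

On average about half of the positions survive sifting. Detecting an eavesdropper requires an additional step (comparing a sample of the sifted bits), which this sketch leaves out.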

13. Watermarking, Steganography, and AI Content

The final chapter bridges classical information hiding with generative AI:

- perceptual models and robustness

- spread-spectrum and QIM watermarking

- deep-learning-based steganography

- watermarking of large generative models

- powered randomness used for sampling

This connects the field’s classical roots to current and future security challenges.

Acknowledgements

I developed the bulk of the materials for the accompanying course during my postdoc, while supported by a Simons Junior Fellowship from the Simons Foundation (965342, D.A.). I am deeply grateful for this support; it gave me the intellectual space to design the course, think deeply about its structure, and begin drafting what would become this book.

This book would not have been possible without the support of my colleagues at UIUC, especially in the Department of Electrical and Computer Engineering. Many colleagues provided helpful feedback while I was developing the materials, attended some class sessions where I tested parts of the exposition, or offered valuable insights on how to structure complex topics such as zero-knowledge proofs, differential privacy, and information-theoretic analyses. Their encouragement and technical discussions greatly shaped the final form of the text.

I will most likely update the textbook every time I teach a subset of the topics covered!